#FakingIt: Russian Cyber Operations in the 2016 Presidential Campaign

By Austen Givens

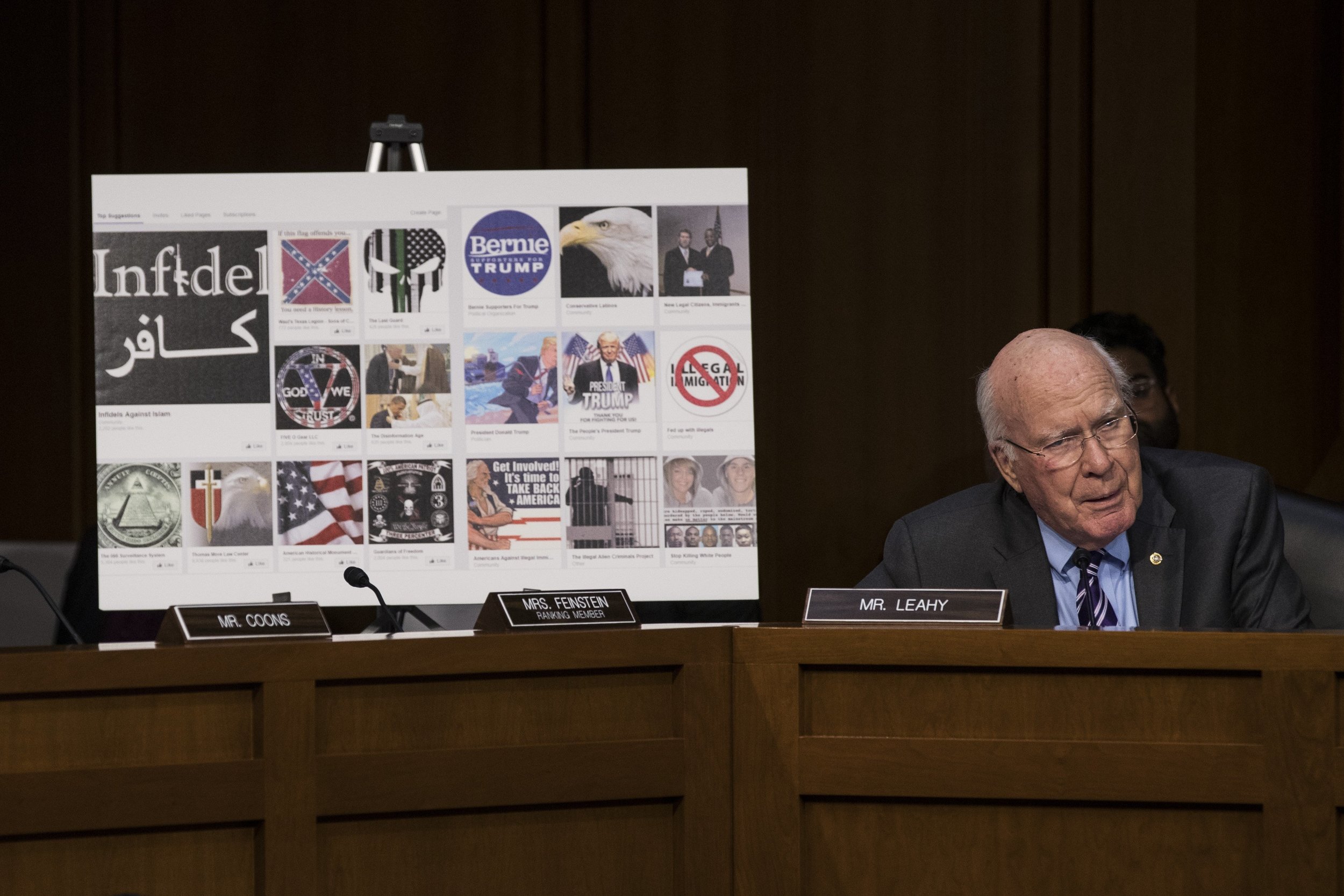

Last week leaders from Facebook, Twitter, and Google testified on Capitol Hill about the roles their respective firms played in skewing the results of the 2016 Presidential Election. These companies say that they unwittingly hosted numerous political advertisements, cooked up in the Kremlin, to damage Hillary Clinton’s prospects of winning the election. Evidence suggests that fake user accounts on Facebook and Twitter helped sway public opinion related to the election, too.

Photo by Wall Street Journal

All of this points toward two key questions that have captured my interest, and that of other scholars in the cybersecurity field: how many fake user accounts exist on social media websites? And how many of these accounts are controlled by Moscow?

The answers to both of these questions depend upon the platform, really. But it seems clear that Twitter hosts far more fake accounts than other social media services. So, I am going to focus on Twitter here.

A study published by researchers at the University of Southern California and Indiana University this year estimated that 9 to 15 percent of all Twitter accounts are bots, not real people.

Statista, a market research website, notes that in Q3 2016, around the time of the 2016 Presidential Election, Twitter had a total of 313 million monthly active users. (This figure distinguishes between active users, meaning those who log in and engage with content, and inactive users, who have user accounts but never log in.)

If we assume that these researchers’ estimates are accurate and take the low end of their range, 9 percent of 313 million, then there were at a minimum about 28 million fake active users on Twitter during the 2016 Presidential Election.

And if we further estimate that just 10% of that total was made up of Russian bots, then about 2.8 million Twitter accounts acted as Russian government agents during the 2016 Presidential Election.
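The arithmetic behind these estimates can be checked with a short sketch. The user count and the 9 to 15 percent range come from the sources quoted above; the 10 percent Russian share is only the illustrative assumption made in the previous paragraph, and the function and variable names are hypothetical.

```python
# Back-of-the-envelope check of the estimates above, using integer math.
MONTHLY_ACTIVE_USERS = 313_000_000   # Statista figure for Q3 2016, as quoted
BOT_PERCENT_RANGE = (9, 15)          # USC / Indiana University estimate
RUSSIAN_PERCENT = 10                 # illustrative assumption, not a measurement


def estimate_bots(users, bot_percent, russian_percent):
    """Return (estimated bot accounts, assumed Russian-controlled bot accounts)."""
    bots = users * bot_percent // 100
    russian_bots = bots * russian_percent // 100
    return bots, russian_bots


for bot_percent in BOT_PERCENT_RANGE:
    bots, russian_bots = estimate_bots(
        MONTHLY_ACTIVE_USERS, bot_percent, RUSSIAN_PERCENT
    )
    print(f"{bot_percent}% bots: {bots:,} accounts, {russian_bots:,} assumed Russian")
```

At the low end this gives 28.17 million bots and about 2.8 million assumed Russian accounts, matching the figures above. At the high end of 15 percent, the same assumptions would give roughly 47 million bots and about 4.7 million assumed Russian accounts.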

Those estimated 2.8 million Russian bots clearly preferred Donald Trump over Hillary Clinton. They also had profound reach and influence. For example, a Wall Street Journal analysis found that during the early stages of the 2016 presidential race, the ratio of praise-to-criticism tweets among then-candidate Donald Trump’s Twitter followers was about 10 to 1. In the months leading up to the 2016 election, several Russian Twitter bots amassed more than 50,000 followers each. Their tweets were featured in prominent media outlets, including The New York Times and The Washington Post.

Today President Trump boasts 42.4 million followers on Twitter. But according to TwitterAudit, a service that evaluates Twitter profiles for authenticity, up to 53% of them—22.3 million—are probably fake.

Given these numbers—estimates, to be sure—it becomes difficult to find fault with the U.S. Intelligence Community’s assessment that Moscow’s efforts to influence the 2016 Presidential Election were effective, at least in the eyes of Russian leaders.

So, what can be done about Russian social media bots?

Ongoing research may help to reduce the number of phony online accounts. For example, a 2012 study by one group of computer scientists used a sample of 62 million Twitter accounts to develop an algorithm to spot fake Twitter users.

Two complementary studies, both published in 2015, built upon these findings. The first leveraged algorithms commonly employed to detect spam emails to identify bogus Twitter followers. The second analyzed profile creation times and status update intervals to spot fraudulent accounts.
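To give a rough sense of how status update intervals might reveal automation, here is a minimal sketch. It is not the method used in either study. It simply assumes that an account posting at almost perfectly regular intervals is more likely to be scripted than one posting in irregular bursts. The function names and the 0.1 threshold are hypothetical choices made for illustration.

```python
from datetime import datetime, timedelta
from statistics import mean, pstdev


def interval_regularity(timestamps):
    """Coefficient of variation of the gaps between consecutive posts.

    Returns None when there are fewer than three posts (fewer than two gaps).
    Values near 0 mean the gaps are almost identical, which looks machine-like.
    """
    ordered = sorted(timestamps)
    gaps = [(b - a).total_seconds() for a, b in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap == 0:
        return 0.0  # every post shares one timestamp
    return pstdev(gaps) / average_gap


def looks_automated(timestamps, threshold=0.1):
    """Flag accounts whose posting gaps vary less than the (assumed) threshold."""
    score = interval_regularity(timestamps)
    return score is not None and score < threshold


# Usage: one account posts every 10 minutes on the dot, the other in bursts.
start = datetime(2016, 11, 1, 12, 0)
steady = [start + timedelta(minutes=10 * i) for i in range(6)]
bursty = [start + timedelta(minutes=m) for m in (0, 1, 61, 66, 300, 302)]
print(looks_automated(steady), looks_automated(bursty))
```

A real detector would combine a signal like this with many others, such as profile creation times, because a careful bot operator can randomize posting intervals and a human using a scheduling tool can look very regular.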

Research like this may lead social media sites like Facebook, Instagram, and Twitter to develop proprietary software that indexes and deletes fake users automatically.

That would go a long way toward countering future Russian cyber operations.

Austen D. Givens is Assistant Professor of Cybersecurity at Utica College. He tweets, seriously, at @GivensAD.